AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

Back to Blog

Airflow dag dependency11/27/2023 Trigger_transform_dag.set_downstream(trigger_load_dag) Trigger_extract_dag.set_downstream(trigger_transform_dag) Trigger_load_dag = TriggerDagRunOperator( Trigger_transform_dag = TriggerDagRunOperator( Trigger_extract_dag = TriggerDagRunOperator( from datetime import datetime, timedeltaįrom _operator import TriggerDagRunOperator ,īelow is an example of a DAG that will run every 5 minutes and trigger three more DAGs using TriggerDagRunOperator. In the output we see a huge dictionary with a lot of information about the current run:, 'inlets'. To look closer at the context object, we can print it out. In the example above, a function simply returns this object, i.e. In the controller function, if the dag_run_obj object is returned, the dag will be triggered. def conditionally_trigger(context, dag_run_obj): This condition can use the execution context passed to the function and can be quite complex. So you see all dag runs in just one page instead of digging into the airflow UI which seems very convenient for me.įor TriggerDagRunOperator we need a controller, a function that controls the start of the target DAG based on some condition.

The cool thing about this operator is that the DAG runs are saved in the history of these same DAGs as well as the logs.

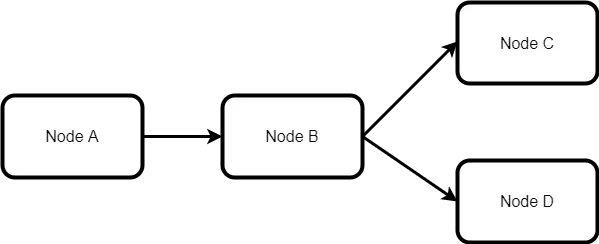

If this is not the case then they will still be triggered but will not be run - just stuck in the running state. It is necessary that the external DAGs are turned on. With this operator and external DAG identifiers, we can easily trigger them. TriggerDagRunOperator is an operator that can call external DAGs. But there are ways to achieve the same in Airflow. However, since they are not in the same DAG, we cannot do this. If those DAGs were tasks in the same DAG, we could just add those lines to the DAG file: t_downstream(transform) # Printing message at the logs and sleep for 2 secondsīash_command='echo "Extracting stuff from s3" sleep 2 ',īash_command='echo "Extracting stuff from jdbc" sleep 2 ',īash_command='echo "Transforming stuff from s3" sleep 2 ',īash_command='echo "Transforming stuff from jdbc" sleep 2 ',īash_command='echo "Loading stuff to s3" sleep 2 ',īash_command='echo "Loading stuff to hive" sleep 2 ', 'start_date': datetime.today() - timedelta(1), $ docker-compose -f docker-compose.yml up -dįrom airflow.operators import BashOperator To do this I will use this docker-compose file with Airflow, PostgreSQL pre-installed and LocalExecutor pre-configured. Let's imagine that we have an ETL process divided between 3 independent DAGs - extract, transform, and load.įor the example to be more illustrative, we need at least a Local executor so that more than one task can be run in parallel. In this post, we gonna discuss what options are available in Airflow for connecting dependent DAGs with each other.

I had exactly this problem - I had to connect two independent but logically connected DAGs. It may end up with a problem of incorporating different DAGs into one pipeline. They get split between different teams within a company for future implementation and support. It would be great to see Airflow or Apache separate Airflow-esque task dependency into its own microservice, as it could be expanded to provide dependency management across all of your systems, not just Airflow.Often Airflow DAGs become too big and complicated to understand.

Tasks with dependencies on this legacy replication service couldn’t use Task Sensors to check if their data is ready. While external services can GET Task Instances from Airflow, they unfortunately can’t POST them. However, what if the upstream dependency is outside of Airflow? For example, perhaps your company has a legacy service for replicating tables from microservices into a central analytics database, and you don’t plan on migrating it to Airflow. You could use this to ensure your Dashboards and Reports wait to run until the tables they query are ready. Even better, the Task Dependency Graph can be extended to downstream dependencies outside of Airflow! Airflow provides an experimental REST API, which other applications can use to check the status of tasks. The External Task Sensor is an obvious win from a data integrity perspective. Sql="SELECT * FROM table WHERE created_at_month = '`", # Run SQL in BigQuery and export results to a tableįrom _operator import BigQueryOperatorĭestination_dataset_table='',

0 Comments

Read More

Leave a Reply. |

RSS Feed

RSS Feed